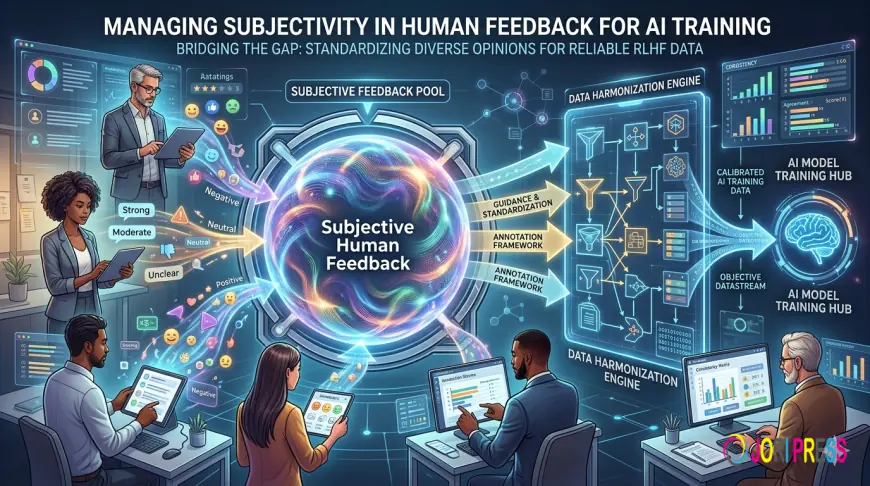

Managing Subjectivity in Human Feedback for AI Training

Learn how a data annotation company manages subjectivity in RLHF Annotation Services, improving consistency, reducing bias, and enhancing LLM performance through high-quality training data.

As artificial intelligence systems grow more sophisticated, the demand for high-quality human feedback has never been greater. In particular, Reinforcement Learning from Human Feedback (RLHF) has become a cornerstone for aligning large language models (LLMs) with human expectations. However, one persistent challenge remains: subjectivity. Human judgment, while valuable, is inherently variable—shaped by individual perspectives, cultural contexts, and cognitive biases. For any data annotation company, effectively managing this subjectivity is critical to delivering consistent, scalable, and high-quality training data.

This article explores how organizations can systematically address subjectivity in human feedback pipelines, ensuring that RLHF Annotation Services produce reliable outcomes that directly enhance model performance.

Understanding Subjectivity in AI Training

Subjectivity in human feedback arises when annotators interpret tasks differently or apply personal judgment inconsistently. Unlike objective tasks—such as labeling objects in images—RLHF often involves evaluating tone, helpfulness, factual accuracy, or ethical alignment. These dimensions are inherently nuanced.

For example, when ranking AI-generated responses, one annotator may prioritize completeness, while another emphasizes conciseness. Without structured controls, such variation introduces noise into the dataset, which can degrade model learning.

This is where understanding How High-Quality Training Data Impacts LLM Performance becomes essential. Models trained on inconsistent or biased feedback may exhibit unpredictable behavior, reduced generalization, and alignment failures.

The Impact of Subjectivity on RLHF Outcomes

Unchecked subjectivity can have several downstream consequences:

- Inconsistent Model Behavior: Conflicting annotations lead to ambiguous reward signals during training.

- Bias Amplification: Annotator biases—cultural, linguistic, or ideological—can propagate into the model.

- Reduced Scalability: High disagreement rates slow down annotation throughput and increase costs.

- Evaluation Challenges: Difficulty in benchmarking model improvements due to lack of annotation consistency.

For organizations investing in data annotation outsourcing, these risks can undermine both efficiency and ROI if not proactively managed.

Strategies to Manage Subjectivity

Effectively managing subjectivity requires a multi-layered approach that combines process design, annotator training, and quality assurance mechanisms.

1. Designing Clear Annotation Guidelines

The foundation of consistency lies in well-defined guidelines. Annotation instructions should:

- Break down abstract concepts (e.g., “helpfulness”) into measurable criteria

- Provide examples of both ideal and poor responses

- Include edge cases and boundary conditions

Instead of vague directives, guidelines should operationalize judgment. For instance, rather than asking annotators to select the “better” response, specify dimensions such as accuracy, relevance, tone, and safety.

A mature data annotation company invests significant effort into iterative guideline development, refining them based on annotator feedback and observed inconsistencies.

2. Calibration and Training Programs

Even with strong guidelines, annotators interpret tasks differently without proper calibration. Regular training sessions help align annotators’ mental models.

Effective calibration includes:

- Gold Standard Tasks: Pre-labeled examples used to benchmark annotator decisions

- Consensus Discussions: Reviewing disagreements to clarify expectations

- Feedback Loops: Providing annotators with performance insights

Calibration is not a one-time activity—it must be continuous, especially in large-scale RLHF Annotation Services where teams are distributed and evolving.

3. Leveraging Multi-Annotator Consensus

Relying on a single annotator increases the risk of subjective bias. Instead, multiple annotators should evaluate the same data points, with aggregation methods such as:

- Majority voting

- Weighted scoring based on annotator reliability

- Adjudication by expert reviewers

This approach reduces individual bias and improves signal reliability. While it increases upfront cost, it significantly enhances downstream model quality—reinforcing how high-quality training data impacts LLM performance.

4. Implementing Robust Quality Assurance Frameworks

Quality assurance (QA) is critical in controlling subjectivity at scale. A strong QA framework includes:

- Inter-Annotator Agreement (IAA) Metrics: Measuring consistency across annotators

- Random Sampling Audits: Periodic checks of annotation quality

- Error Taxonomy Tracking: Identifying recurring patterns of disagreement

By quantifying subjectivity, organizations can proactively address it rather than reactively correcting errors.

For companies leveraging data annotation outsourcing, QA frameworks also ensure vendor accountability and standardized output quality.

5. Role of Domain Expertise

Not all annotation tasks are equal. Complex domains—such as healthcare, legal, or finance—require subject matter expertise to reduce ambiguity.

For example, evaluating the factual correctness of a medical response demands domain knowledge that general annotators may lack. Incorporating expert annotators or layered review systems ensures higher accuracy and reduces subjective misinterpretation.

6. Cultural and Linguistic Sensitivity

Subjectivity is often influenced by cultural and linguistic context. A response considered polite in one culture may be perceived differently in another.

To address this:

- Build diverse annotation teams

- Localize guidelines for regional contexts

- Incorporate cross-cultural review mechanisms

This is particularly important for global AI systems, where alignment must reflect a broad spectrum of user expectations.

7. Tooling and Workflow Optimization

Advanced annotation platforms can help standardize decision-making and reduce subjectivity. Features include:

- Structured evaluation forms with predefined criteria

- Real-time validation checks

- Annotator performance dashboards

These tools guide annotators toward consistent outputs while enabling managers to monitor quality trends.

Leading providers of RLHF Annotation Services invest in such tooling to ensure both efficiency and reliability.

Balancing Subjectivity and Human Judgment

It is important to recognize that subjectivity is not inherently negative. In fact, human judgment is what enables AI systems to align with nuanced human values. The goal is not to eliminate subjectivity, but to standardize and guide it.

A well-managed feedback system captures the richness of human evaluation while minimizing noise and inconsistency. This balance is what differentiates high-performing AI models from those that struggle with alignment.

The Role of Strategic Annotation Partnerships

For many organizations, managing subjectivity internally can be resource-intensive. This is why businesses increasingly turn to data annotation outsourcing.

A specialized data annotation company brings:

- Established QA frameworks

- Pre-trained and calibrated annotator pools

- Scalable infrastructure for large datasets

- Continuous process optimization

By partnering with experienced providers, companies can focus on model development while ensuring that their training data remains consistent and high-quality.

Conclusion

Managing subjectivity in human feedback is one of the most complex challenges in AI training. However, with the right combination of structured guidelines, continuous calibration, multi-annotator validation, and robust QA systems, it is entirely manageable.

At Annotera, we understand that the effectiveness of RLHF Annotation Services depends on the precision and consistency of human input. By combining domain expertise, advanced tooling, and rigorous quality controls, we help organizations transform subjective human judgments into reliable training signals.

Ultimately, the success of any AI system hinges on the quality of the data it learns from. And as the industry continues to evolve, mastering subjectivity will remain central to unlocking the full potential of intelligent systems.

What's Your Reaction?

Like

0

Like

0

Dislike

0

Dislike

0

Love

0

Love

0

Funny

0

Funny

0

Angry

0

Angry

0

Sad

0

Sad

0

Wow

0

Wow

0